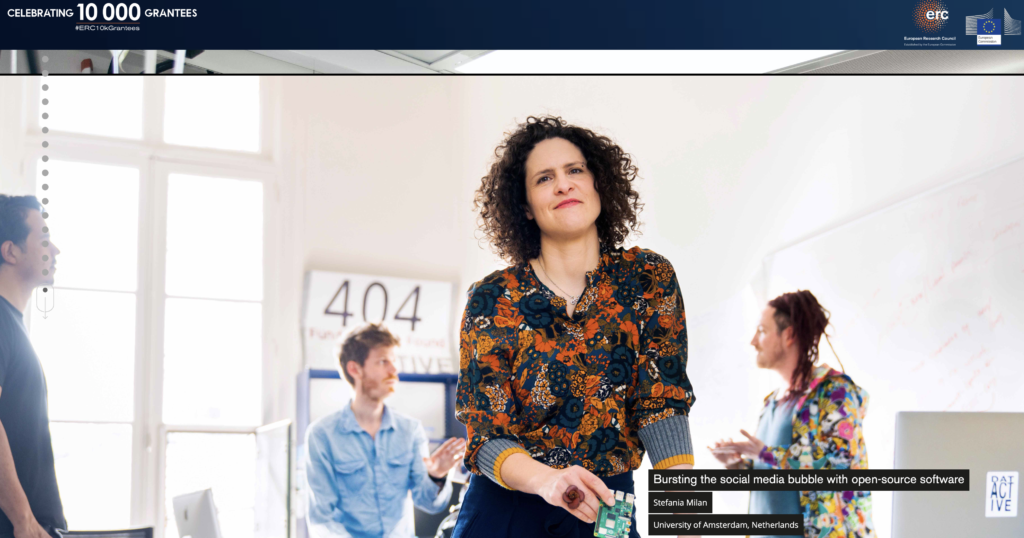

We are nearing the end of the ERC-funded DATACTIVE project and we have many accomplishments to celebrate!

For almost six years now, we have worked together to investigate the complex and multifaceted field of data activist imaginaries and practices. We have had a wonderful time bringing together academics, practitioners, hackers and artists from around the world. We have conducted numerous interviews and focus groups, carried out participant observation at activist events, and even developed our own open-source tools to support our research. Together, we have traced the evolution of a global network of data activists and tried to figure out how institutions are reacting to their mobilization. We have explored the various ways in which publics engage with surveillance regimes and how notions of risk articulate strategies to resist them. We have shed light on the workings of the algorithms that power big tech platforms and located how human rights considerations painstakingly make their way into the infrastructure of the internet. With the onset of the COVID-19 pandemic, we have also devoted our attention to the politics of counting in the first pandemic in a datafied society, its inherent forms of exclusion and the risks of techno-solutionism.

Throughout these six years, the ‘we’ of DATACTIVE has been neither static nor stable. DATACTIVE has been home to no fewer than 26 people in its core team, plus the many other research associates, interns, and collaborators who visited for a period of time and enriched our work with their expertise and unique contributions.

Although the project is coming to an end, we are well aware that our work is not done. We will take our data-activist approach to research to other venues and groups, and continue asking critical questions wherever we land.

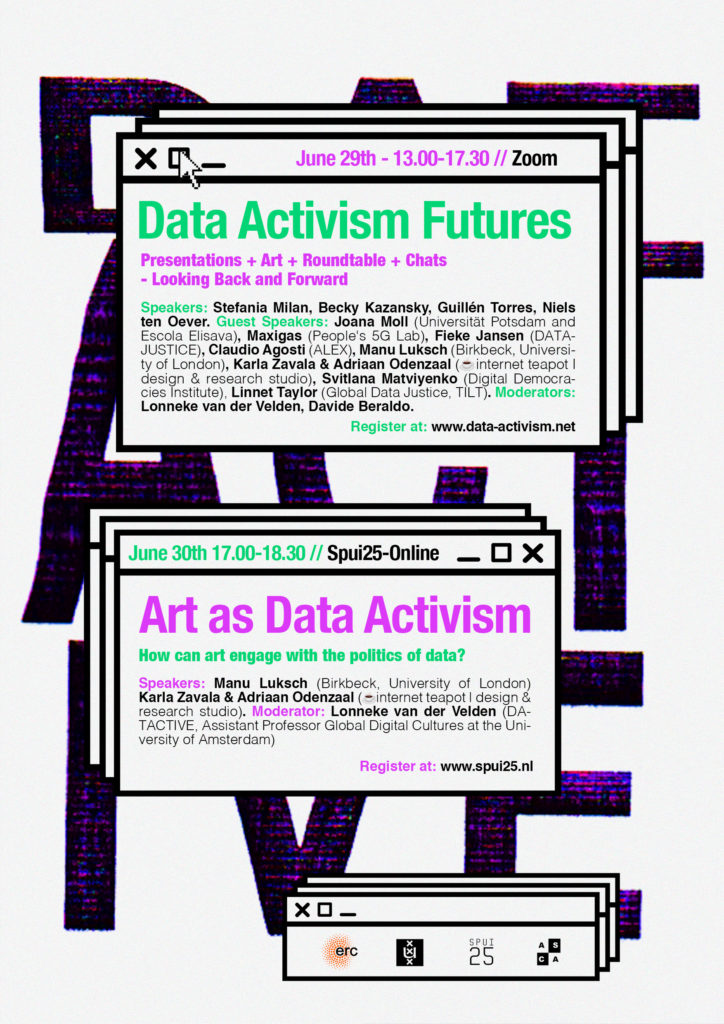

But it is now time for thanks and celebrations! Please join us on Tuesday the 29th of June 2021 for the DATACTIVE closing event, entitled DATA ACTIVISM FUTURES, to celebrate, look back and ponder the future. Our Principal Investigator Stefania Milan will reflect on five years of data activism, after which the PhD candidates will take centre stage. Becky Kazansky will shed light on threat modelling within civil society and grassroots resistance to surveillance, Guillén Torres will present his work on institutional resistance to transparency efforts by citizens, and Niels ten Oever will take us through the politics of infrastructure. Guest speakers include Maxigas (University of Amsterdam), Fieke Jansen (Data Justice Lab, Cardiff University), Claudio Agosti (Algorithms Exposed), and Svitlana Matviyenko (Digital Democracies Institute). Davide Beraldo will engage in a discussion with artists Joana Moll, Manu Luksch, Viola van Alphen, Karla Zavala and Adriaan Odenzaal about how art can contribute to the data activism agenda by fostering critical data literacy.

The DATACTIVE final event will be followed by a public roundtable discussion on ART AS DATA ACTIVISM, scheduled for the next day, Wednesday the 30th of June 2021. Note that each event has its own separate registration process. Find more details below!

DAY 1: DATA ACTIVISM FUTURES

June 29th 13.00-17.30 CEST

The event is broadcast live from Engage! TV studios and takes place online. Sign up HERE

PROGRAM

13.00-13.30 5 years of contentious politics of data: What changed? Stefania Milan in conversation with Lonneke van der Velden

13.30-13.40 Launch of DATACTIVE video pills

13.40-14.00 PhD project pitches: Data activism as a form of…

14.00-14.40 Breakout rooms: Extended PhD presentations + Q&A

Data activism as a form of:

1. Institutional resistance (Guillen Torres)

2. Resistance to surveillance (Becky Kazansky)

3. Politics of infrastructure (Niels ten Oever)

BREAK

15.00-15.35 Art as data activism: A conversation featuring Joana Moll, Manu Luksch, ☕️ internet teapot l design & research studio (Karla Zavala & Adriaan Odenzaal), and Viola van Alphen. Moderated by Davide Beraldo

15.35-16.30 Research futures for data activism: A fishbowl discussion with practitioners, featuring Maxigas, Fieke Jansen, Claudio Agosti and Sanne Stevens

16.30-17.00 Data activism futures: Closing remarks. Stefania Milan in conversation with Linnet Taylor

17.00 Thank you & festive moment. Keep your favorite drink at hand!

PARTICIPANTS

From DATACTIVE: [Speakers] Stefania Milan (Associate Professor of New Media and Digital Culture, University of Amsterdam), Guillen Torres (PhD at DATACTIVE, University of Amsterdam), Niels ten Oever (former PhD at DATACTIVE, now postdoctoral researcher at IN-SIGHT, University of Amsterdam), Becky Kazansky (PhD at DATACTIVE, University of Amsterdam). [Moderators] Lonneke van der Velden (DATACTIVE, Assistant Professor Global Digital Cultures at the University of Amsterdam), Davide Beraldo (Senior Lecturer and postdoctoral researcher at DATACTIVE, University of Amsterdam) & Niels ten Oever. [Co-organiser] Jeroen de Vos (DATACTIVE Project Manager, University of Amsterdam).

Guest speakers: Linnet Taylor (Global Data Justice, Tilburg University), Maxigas (People’s 5G Lab, University of Amsterdam), Fieke Jansen (DATA JUSTICE, Cardiff University), Claudio Agosti (Algorithms Exposed), Sanne Stevens (Justice, Equity and Technology Table), Joana Moll (Barcelona/Berlin based artist and researcher, Universität Potsdam and Escola Elisava), Manu Luksch (Artist in Residence at The School of Law, Birkbeck, University of London), Karla Zavala & Adriaan Odenzaal (☕️ internet teapot l design & research studio), and Viola van Alphen (Creative Director and Curator Manifestations@Dutch Design Week. Art, Tech, and Fun!).

DAY 2: ART AS DATA ACTIVISM

June 30th 17.00-18.30 CEST

The event is hosted by Spui25 and takes place online. Sign up HERE

Art as a form of political engagement is a proven formula, but what about art as a form of data activism? Can art help us better understand and question the politics of everyday data flows? In the current context of datafication (the turning of every aspect of our lives into data points for further processing), artistic practice offers diverse ways to foster public engagement with data. From the early examples of the Net.Art movement to more recent artistic interrogations of automated decision-making systems, the speakers in this panel will offer different perspectives on the role of art in questioning the power asymmetries created as a result of the use of data by governments, corporations and platforms.

Speakers: Manu Luksch (Artist in Residence at The School of Law, Birkbeck, University of London), Karla Zavala & Adriaan Odenzaal (☕️ internet teapot l design & research studio), and Viola van Alphen (Creative Director and Curator Manifestations@Dutch Design Week. Art, Tech, and Fun!).

Moderator: Lonneke van der Velden (DATACTIVE and Assistant Professor Global Digital Cultures at the University of Amsterdam)